Jack Dorsey Will Fail

Org structure is rarely a decisive advantage, but a bad one is a big disadvantage…

Jack Dorsey and Roelof Botha recently argued that AI can replace the coordination function of middle management entirely. Replace the hierarchy with an AI driven “world model” of company operations and customer behavior. Let an “intelligence layer” compose solutions autonomously. Push the remaining humans to “the edge.” The company becomes, in Dorsey’s phrase, “an intelligence.”

The argument rests on a premise that sounds self evident but is quietly wrong. Dorsey treats coordination as an information problem. If managers exist primarily to route information through layers of hierarchy, and AI can route information faster, then managers are an impediment to be removed. But managers do something that is explicitly not an information function. They make commitments. A manager who tells an employee “I will go to bat for you on this promotion” is not routing data. A manager who tells a partner “I am personally guaranteeing we will deliver” is not processing a signal. A manager who sits across from someone being terminated and says “I want to help you land well” is not coordinating a workflow. These are acts of accountability, reciprocity, and institutional commitment that create the trust on which organizations actually run. No world model generates trust. No intelligence layer makes a promise. That is the gap at the center of the Dorsey thesis. It is structural, not temporary, and it is something I find many neurodivergent CEOs fail to understand…

It is also worth noting what this essay is. It is a vision statement, not a case study. Block has not implemented this model and demonstrated results. No product has shipped under this structure. No customer outcome has been measured. Dorsey published it days after the largest AI attributed workforce reduction in corporate history, and his own framing has shifted dramatically. In his March 2025 layoff memo he stated the cuts were “not about replacing folks with AI.” Eleven months later, the narrative reversed entirely. Even Sam Altman has acknowledged that some companies engage in “AI washing,” and a Resume.org survey found that 59% of hiring managers admit to emphasizing AI in layoff messaging because it plays better with stakeholders. Before circulating this essay internally with a note that says “we need to talk about this,” consider that it describes an untested theory authored by a CEO who tripled his headcount during the pandemic, acknowledged the overhiring, and then rebranded the correction as visionary strategy.

The question is whether the model can travel. For most companies, even well run ones, the answer is no. The reasons are structural, not operational, and they cluster around three gaps that would constrain companies far more sophisticated than the one described here.

To ground the analysis, consider Acme Inc., a well managed enterprise software firm of 2,500 employees with government and commercial contracts, a data analytics platform, and a profile resembling a smaller Palantir or Datadog. Acme ships on schedule, exceeds retention benchmarks, uses AI in its engineering workflow, and thinks seriously about organizational design. It is the kind of company whose board reads the Dorsey essay and asks the CEO to respond.

A brief translation first. A “world model” is a continuously updated, AI readable representation of everything happening inside a company and with its customers. Instead of a product manager hypothesizing that small restaurants need faster access to short term loans, Block’s customer world model would detect from transaction data that a specific restaurant’s cash flow is tightening ahead of a seasonal pattern, and the intelligence layer would compose a loan offer automatically. Implementation requires five things. First, making all work machine readable: engineers resolve questions in written threads rather than hallway conversations, so the AI has something to read. Second, building data infrastructure that continuously maps who is building what and where resources are misallocated. Third, redesigning products to capture what customers actually accomplish rather than what they click. Fourth, deconstructing products into modular capabilities the intelligence layer can assemble dynamically: not a predetermined “Enterprise Analytics Suite” but atomic components like ingestion, visualization, alerting, and anomaly detection that get composed per customer. Fifth, rebuilding culture around edge autonomy, where individuals act on judgment without waiting for approval chains.

Here is why these gaps are structural, not solvable by better execution.

The first gap is data integrity, and it is an economic problem before it is a cultural one.

Dorsey’s model requires two forms of proprietary signal. Internally, it needs a company world model built from digital artifacts: every Slack message, code commit, design document, and decision record the company produces. Externally, it needs a customer world model built from behavioral data rich enough to predict what customers need before they ask. Block claims a two sided data advantage because it sees the buyer through Cash App and the seller through Square. But analysts have noted that the actual overlap is small. Most Cash App users do not shop at Square merchants, and most Square merchants do not have customers paying through Cash App. The fully integrated signal Dorsey describes remains aspirational.

The internal model faces a deeper challenge. At Acme, the most consequential organizational knowledge exists as unstructured contextual variables that resist digitization, not because they are mysterious but because the cost of capturing them exceeds the value the model could extract. When Acme’s sales director reads a prospect’s CTO as evasive about timelines and interprets this as a buying signal rather than an objection, that judgment synthesizes tone of voice, historical patterns across dozens of similar deals, awareness of the prospect’s internal politics gleaned from a conference conversation eighteen months ago, and an intuitive weighting of which factors matter most in this specific configuration. In principle, every one of these inputs could be digitized. In practice, they are high variance, low frequency signals whose capture cost per useful data point would be orders of magnitude higher than the value the world model could extract. This is not a technology limitation waiting to be solved. It is a permanent feature of how organizational knowledge works. The highest value signals in any company are precisely the ones that are most expensive to digitize, because their value comes from their rarity, their context dependence, and their entanglement with specific human relationships.

Even the cultural transformation required to make routine work machine readable is a multi year organizational redesign, because it requires changing how every person communicates, collaborates, and builds professional identity. Managers who built their reputations on running effective meetings are being told meetings are the problem. Engineers who solve problems by walking to a colleague’s desk are being told to slow down and write. Sales teams built on phone calls and relationship selling are being told to put everything in a thread. Each of these changes meets resistance not because people are irrational but because the existing behaviors work. Zappos lost 30% of its workforce attempting a comparable cultural shift and eventually reintroduced managers under different titles. Google eliminated engineering managers in 2002 and reversed course within months.

The customer side model faces its own version of the same problem, and the contrast between Block and Acme illustrates it precisely. When Block sees a merchant’s transaction volume declining over three weeks while loan repayment holds steady, the system infers a cash flow squeeze and offers a bridge loan. For Acme to match this, it would need to know that a customer’s analyst used Acme’s anomaly detection tool and presented the finding to their CFO, and that the company then avoided a $2 million inventory write down. Acme has no mechanism to capture that downstream outcome, and building one is a design problem, not a coding problem. How do you capture what a human did with an insight in a way that is accurate, non burdensome, and rich enough to feed a predictive model? Nobody has solved this for enterprise software.

The second gap is the erosion of social capital, and it is the one whose consequences show up first with customers and partners.

Ronald Burt’s work on structural holes establishes that individuals who bridge disconnected groups within and between organizations create disproportionate value through brokerage. They transfer tacit knowledge, build trust across boundaries, and enable coordination that formal structures cannot achieve. These brokers are the highest value nodes in any organizational network. They are also precisely the people Dorsey proposes to eliminate.

Start with what customers will see. Acme’s largest government customer has a CTO who has worked with Acme’s VP of Engineering for six years, through three contract renegotiations, two security incidents, and a change of administration. That relationship protects tens of millions in annual contract value. It exists because two specific human beings have shared enough difficult experiences to trust each other’s judgment. When the Dorsey model reclassifies Acme’s VP of Engineering as a player coach or a DRI with a rotating assignment, the government CTO does not get a seamless handoff. He gets a stranger with access to a world model. The world model can tell the stranger everything that has happened in the account. It cannot tell the stranger that this particular CTO values directness over diplomacy, that he needs to hear the bad news first, that the relationship survived the second security incident only because Acme’s VP flew to Washington and spent two days onsite demonstrating accountability in person. The CTO will notice the difference. And when the contract comes up for renewal, the CTO will take the call from the competitor whose leadership structure still includes someone he trusts.

Now consider what partners will do. Acme’s VP of Partnerships has spent eight years building relationships with counterparts at three major channel partners. Those relationships generate tens of millions in annual revenue. They were built through conferences, late night calls when deals were falling apart, and years of demonstrated reliability. If Acme flattens its structure and that VP is reclassified or leaves, those relationships do not transfer to a DRI with a 90 day assignment. They evaporate. And the partners, who have their own competitive dynamics and their own relationship driven cultures, will redirect their energy toward vendors whose organizational structures still include someone they know and trust. Salesforce’s partnership ecosystem generates nearly three times the company’s direct revenue. That ecosystem was built by people who earned the trust of other people, not by algorithms that optimized for speed.

Internally, the functions Dorsey dismisses as coordination overhead are the primary mechanisms by which organizations develop the people who eventually run them. Research shows retention rates for mentored employees run 41 to 50 percent higher, and mentored employees are five times more likely to be promoted. A player coach who is simultaneously writing code and designing interfaces will not have the bandwidth to notice that a promising 26 year old engineer keeps making the same communication mistakes in code reviews and needs coaching, not a performance flag. That noticing, that human attentiveness to another person’s development, is one of the most valuable things a good manager does. It is also one of the least documentable and least automatable.

And there is a set of management functions that are not information problems at all. They are commitment problems. Who terminates an underperforming employee with dignity and care? Who thanks someone for exceptional work in a way that makes them feel genuinely seen by the institution they have given years to? Who sits with someone after a project failure and helps them find the learning rather than assigning blame? Who advocates for a quiet contributor whose work deserves visibility but who will never self promote? These acts of institutional commitment are what make a company a place where people are willing to take risks, absorb setbacks, and invest the discretionary effort that distinguishes a functional organization from a great one. The essay treats them as friction. They are the load bearing walls.

The third gap is the accountability paradox, which reveals that the model is not just difficult to implement but structurally fragile regardless of how good the company is.

Middle managers function as variance dampeners. They absorb the noise that accompanies complex operations, distinguishing signal from artifact, catching errors before they propagate, and applying institutional context that converts raw data into actionable judgment. In a traditional hierarchy, a flawed analysis from a junior analyst gets reviewed by a manager who notices the data set excluded Q4, which skews the trend line. The error is caught. The decision it would have informed is corrected. In the Dorsey model, where the intelligence layer acts on analysis autonomously, the error propagates directly into a customer facing action.

This creates a specific and dangerous form of fragility. When the AI driven intelligence layer makes a decision, the data environment changes as a result of that decision. The AI then reads the changed data and makes a subsequent decision based on it. Without human managers applying judgment about whether the data shift reflects genuine reality or is an artifact of the system’s own prior action, the model risks entering a recursive optimization loop, a feedback spiral in which each decision degrades the data environment for the next. This is not a hallucination problem. It is a systems dynamics problem, and it is inherent to any architecture that removes the human judgment layer between automated analysis and consequential action.
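The loop can be made concrete with a toy simulation. The sketch below is an illustration under invented assumptions, not a model of Block or of any real system: a controller sets a discount from observed sales, each discount lifts current sales but borrows the same volume from the next period, and every number (`target`, `lift_per_point`, the one off shock) is made up. The `discount_own_effect` flag is a stand in for the judgment a manager applies when asking whether a shift in the data is real or is an echo of the system’s own prior action.

```python
from __future__ import annotations


def simulate(steps: int, discount_own_effect: bool) -> list[float]:
    """Toy feedback loop: a controller sets a discount from observed sales."""
    target = 100.0         # assumed steady underlying demand, units per period
    lift_per_point = 2.0   # assumed extra units sold now per discount point
    discount = 0.0
    borrowed = 0.0         # units pulled out of this period by the last discount
    observed_sales = []

    for period in range(steps):
        shock = -10.0 if period == 0 else 0.0   # one genuine, one-off dip
        lift = lift_per_point * discount
        observed = target + shock + lift - borrowed
        observed_sales.append(observed)

        if discount_own_effect:
            # Strip out what the system's own actions did to the data.
            estimate = observed - lift + borrowed
        else:
            # Naive: treat the observed number as ground truth.
            estimate = observed

        borrowed = lift                           # next period pays this back
        discount = max(0.0, target - estimate)    # discount only when below target

    return observed_sales


naive = simulate(6, discount_own_effect=False)
reviewed = simulate(6, discount_own_effect=True)
print(naive)     # [90.0, 120.0, 80.0, 140.0, 60.0, 180.0]
print(reviewed)  # [90.0, 120.0, 80.0, 100.0, 100.0, 100.0]
```

Under these assumptions the naive controller reads each post discount dip as fresh weakness and discounts again, so the swings widen every cycle. The controller that attributes the dip to its own prior action settles back to the baseline after one round.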

Consider the decisions Acme’s middle management handles quarterly. Whether to kill a product line that still generates revenue. Whether to walk away from a major customer whose demands distort the roadmap. Which of two qualified candidates to choose for a role, knowing the decision will demoralize the one passed over. None of these are information problems. All require weighing competing interests, navigating political terrain, and accepting personal accountability for outcomes that will not be clear for years. A 28 year old DRI with a 90 day assignment lacks the institutional standing to make these calls. A world model cannot make them because they are fundamentally about values and tradeoffs, not data.

Chris Savage, CEO of Wistia, reflected publicly that his company’s flat structure had done the opposite of what he intended, centralizing all decision making and creating “a secret implicit structure.” Kim Scott has observed that flattened organizations increase rather than decrease the return on political behavior. The meritocracy flat structures promise almost never materializes. What materializes instead is a political landscape that is harder to see, harder to challenge, and harder to hold accountable than the hierarchy it replaced.

The evidence is no longer theoretical. The wave of AI attributed workforce reductions in 2025 produced a measurable counter phenomenon. The Great Flattening of 2025 has become the Great Rehiring of 2026, as companies discovered that deleting their human coordination layer meant deleting the institutional memory, relationship capital, and distributed judgment that the layer was quietly providing. A Careerminds survey of 600 HR professionals found that two thirds of companies that conducted AI driven layoffs had already begun rehiring, more than half within six months. Nearly a third reported losing critical skills and institutional memory they could not replace. Only 8% said the restructuring delivered promised results without modification.

These three gaps are not implementation challenges. They are structural features of how organizations work. The Dorsey model requires all three to be absent simultaneously. No company meets that condition.

There is a better model. It begins with the same insight, that information flow drives speed and AI can accelerate it dramatically, but arrives at a fundamentally different architecture.

The structural distinction maps across seven dimensions.

On primary goal, the Dorsey model optimizes for velocity. The augmented model optimizes for resilience and judgment quality.

On middle management, the Dorsey model eliminates it as friction. The augmented model transforms it into an error correction and commitment layer.

On decision making, the Dorsey model pushes decisions to the edge, where individual contributors lack institutional standing and context. The augmented model anchors decisions in social capital and distributed accountability.

On data philosophy, the Dorsey model assumes deterministic behavioral signal. The augmented model treats organizational data as probabilistic and context dependent, requiring human interpretation to distinguish signal from artifact.

On risk profile, the Dorsey model concentrates fragility by removing the variance dampening layer between automated analysis and action. The augmented model distributes robustness through layered judgment that catches errors before they propagate.

On talent development, the Dorsey model assigns it to player coaches as a secondary function. The augmented model preserves it as a primary organizational capability performed by managers freed from information gathering to focus on people.

On external relationships, the Dorsey model treats them as interchangeable. The augmented model recognizes them as non transferable social capital that compounds through personal commitment over years.

In practice, this means the world model exists but serves managers rather than replacing them. At Acme, the VP of Engineering no longer spends Monday mornings in a two hour standup synthesizing updates from six team leads. The system surfaces the three things that need her judgment: the deployment behind schedule because of a dependency on a team that does not know about it, the senior engineer whose commit frequency dropped 40% this month, and the customer integration consuming twice the estimated hours. She spends her time deciding what to do about those things rather than discovering they exist. Her job shifts from gathering information to exercising the judgment, making the commitments, and maintaining the relationships that the system cannot.

The intelligence layer recommends rather than acts. When the system identifies that a customer’s usage pattern suggests they are outgrowing their current tier, it does not autonomously restructure the contract. It surfaces the insight to the account manager who knows that this customer’s CTO is in budget freeze until Q3, that the last vendor who pushed an upgrade during a freeze lost the account, and that the right move is a quiet conversation at next month’s industry conference rather than an automated proposal. The human provides judgment, accountability, and the institutional commitment the system cannot generate.
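One way to picture “recommends rather than acts” is as a constraint on the system’s interface. The sketch below is hypothetical throughout: `IntelligenceLayer`, `Recommendation`, `Decision`, and the account names are invented for illustration and do not describe any real product. The point it tries to show is that the system can only queue a recommendation, and nothing is executed without a decision carrying the name of the human who owns it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    account: str
    insight: str
    proposed_action: str


@dataclass(frozen=True)
class Decision:
    owner: str       # the named human accountable for the outcome
    action: str      # what will actually be done; may differ from the proposal
    rationale: str   # context the system did not have


class IntelligenceLayer:
    """Hypothetical layer that can surface insights but cannot act alone."""

    def __init__(self) -> None:
        self.review_queue: list = []
        self.action_log: list = []

    def surface(self, rec: Recommendation) -> None:
        # The only thing the system does on its own: queue the insight.
        self.review_queue.append(rec)

    def execute(self, decision: Decision) -> None:
        # There is no path from a Recommendation to an action
        # that skips a human owned Decision.
        if not decision.owner:
            raise ValueError("every action needs a named human owner")
        self.action_log.append(decision)


layer = IntelligenceLayer()
layer.surface(Recommendation(
    account="customer_a",
    insight="usage pattern suggests they are outgrowing their current tier",
    proposed_action="send automated upgrade proposal",
))

# The account manager reviews the insight and chooses a different action.
rec = layer.review_queue.pop(0)
decision = Decision(
    owner="account_manager",
    action="raise it in person at next month's industry conference",
    rationale="customer CTO is in a budget freeze until Q3",
)
layer.execute(decision)
```

The design choice is that accountability is a required field, not a log entry added after the fact: the rationale records the context the human supplied, and the action taken is allowed to differ from the one the system proposed.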

This is not incrementalism. It is a different theory of competitive advantage. The Dorsey model bets that speed, achieved by removing the human layer, is the primary source of value. The augmented model bets that AI driven information flow combined with human judgment, social capital, and accountability produces outcomes a purely automated system cannot match. The evidence from network theory, the empirical record of flat organizations at scale, and the 2026 rehiring data all favor the augmented model.

Three guardrails follow.

First, invest in behavioral signal before restructuring around it. If a company cannot articulate what its customers accomplish with its product, not what they click but what value they create, then no AI architecture will produce a useful customer world model. The investment in capturing downstream outcomes must precede the organizational redesign that depends on it.

Second, treat social capital as a balance sheet asset, not an overhead cost. Map the organizational network. Identify the brokers who bridge structural holes between teams, partners, and functions. Before removing any management layer, model what happens to the network when those nodes disappear. If the answer is fragmentation, the reorganization will destroy more value than it creates. And think specifically about what your customers and partners will see, feel, and do when the person they trust is no longer there.
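A minimal sketch of that modeling step, assuming the relationship map has already been collected as a list of who works closely with whom. The names and edges below are invented, and the check is deliberately crude: it counts how many disconnected pieces the network breaks into when one person is removed, which is a simple cut point test rather than Burt’s own constraint measure.

```python
from collections import deque


def build_graph(edges):
    """Turn (a, b) relationship pairs into an undirected adjacency map."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def count_components(graph, removed=frozenset()):
    """Count connected groups, ignoring any nodes listed in `removed`."""
    seen = set(removed)
    count = 0
    for start in graph:
        if start in seen:
            continue
        count += 1
        seen.add(start)
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for neighbor in graph[node]:
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append(neighbor)
    return count


def fragmentation_by_node(graph):
    """Map each person whose removal splits the network to the piece count."""
    baseline = count_components(graph)
    result = {}
    for node in graph:
        after = count_components(graph, removed={node})
        if after > baseline:
            result[node] = after
    return result


# Hypothetical relationship map for a company like Acme.
edges = [
    ("vp_eng", "eng_a"), ("vp_eng", "eng_b"), ("eng_a", "eng_b"),
    ("vp_eng", "gov_cto"),
    ("vp_eng", "vp_partnerships"),
    ("vp_partnerships", "partner_x"), ("vp_partnerships", "partner_y"),
]
fragmentation = fragmentation_by_node(build_graph(edges))
print(fragmentation)  # {'vp_eng': 3, 'vp_partnerships': 3}
```

In this invented map, removing either VP splits one connected network into three pieces and leaves the government CTO or the channel partners with no remaining tie to the company, while removing an individual engineer changes nothing. A real analysis would need weighted ties and better data, but even this crude version makes the fragmentation question answerable before the reorganization rather than after.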

Third, design for error absorption, not error elimination. Layered human judgment is the mechanism by which organizations absorb the inevitable failures of automated systems. Removing it in pursuit of speed creates brittleness that may not become visible until the first serious failure, at which point the damage will be structural.

The Dorsey essay asks the right question. What does your company understand that is genuinely hard to understand? But it arrives at the wrong answer about what to do with that understanding. Coordination is not merely an information problem. It is a commitment problem. The companies that will lead are not the ones that replace human judgment with AI. They are the ones that use AI to make human judgment faster, better informed, and more consequential, while preserving the commitments, the relationships, and the accountability that no world model can replicate.

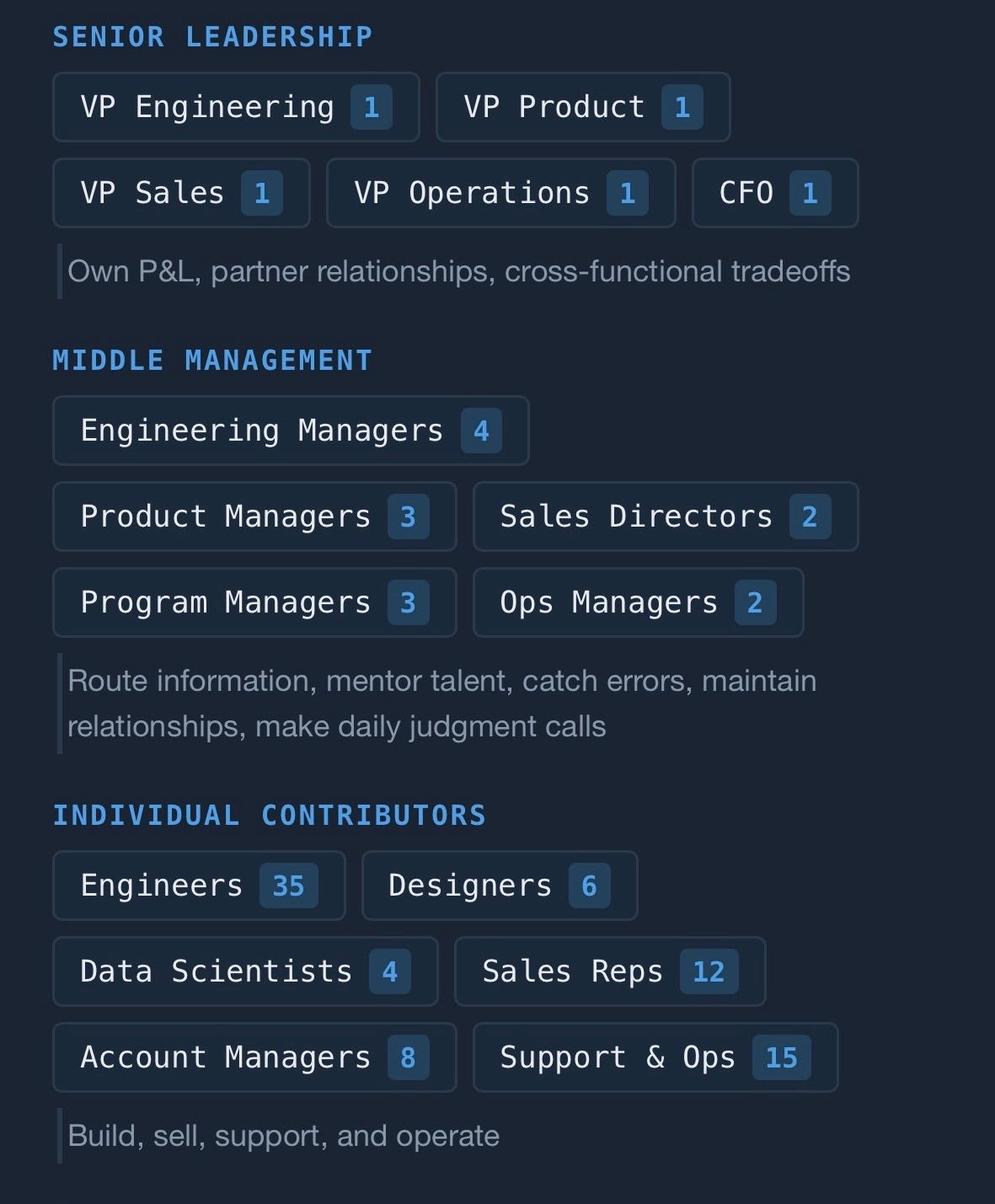

Original Organization:

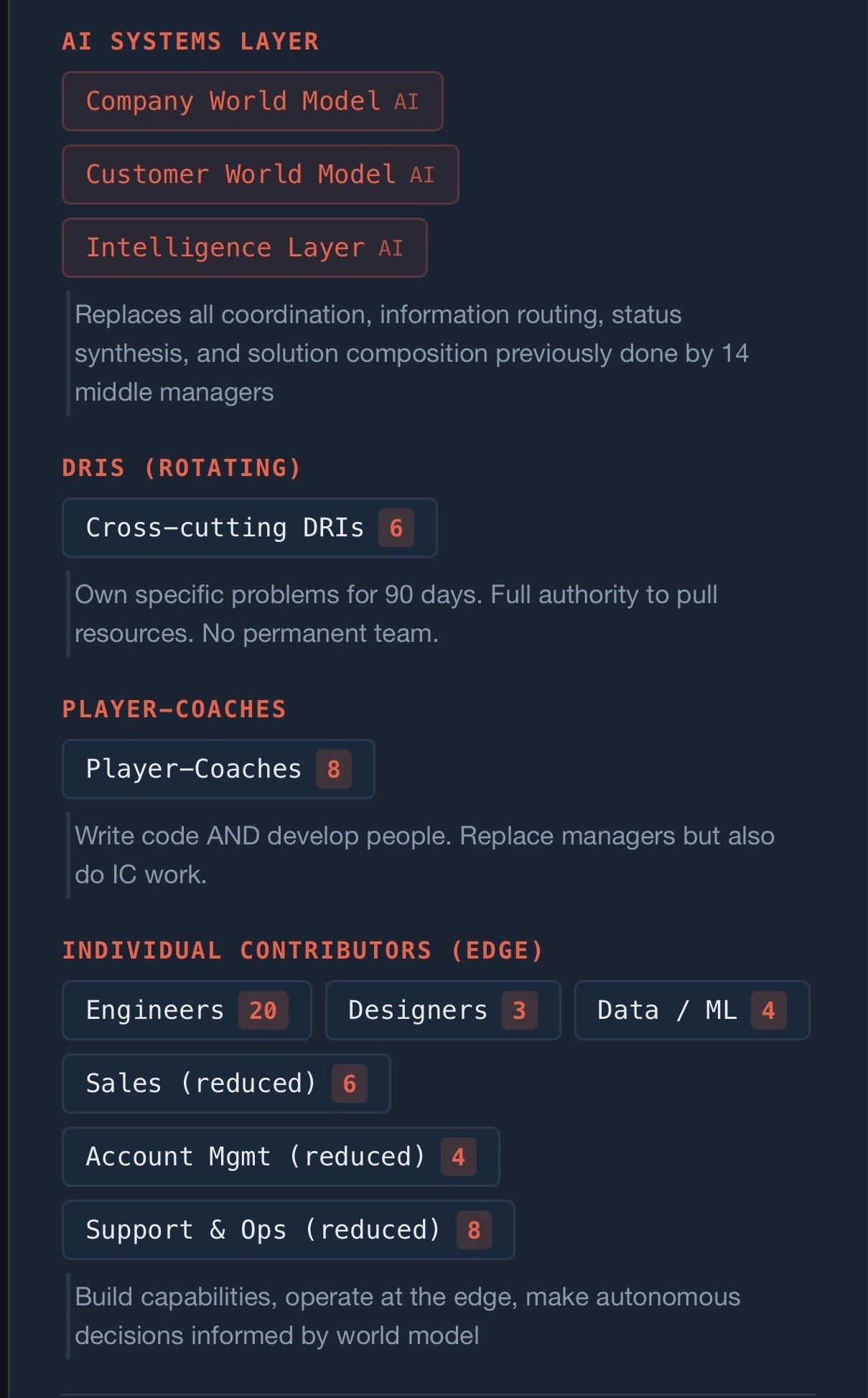

Jack’s Organization:

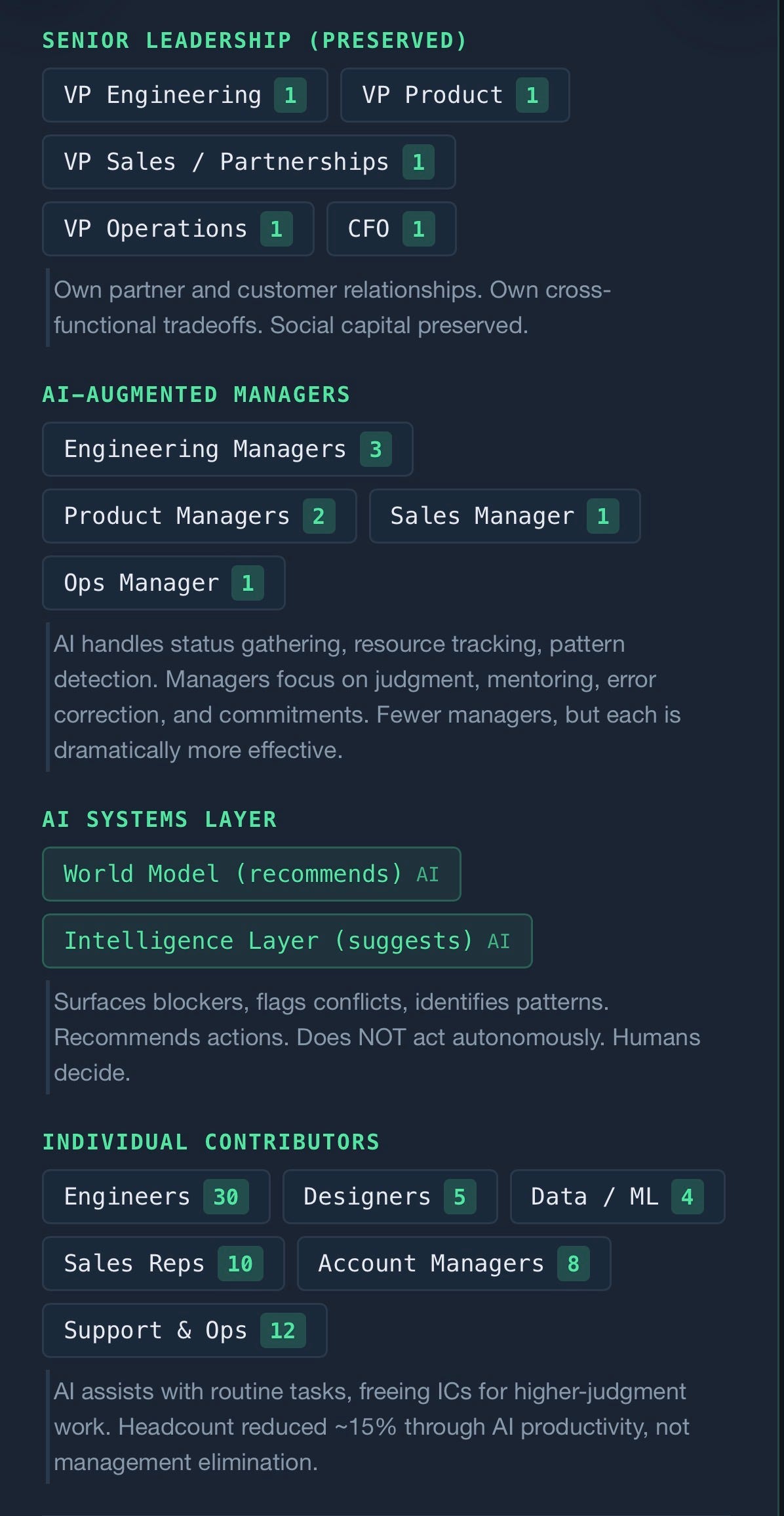

Proposed Organization: